MIX10: A MATLAB TO X10 COMPILER FOR HIGH PERFORMANCE

by

Vineet Kumar

School of Computer Science

McGill University, Montréal

Monday, April 14th 2014

A THESIS SUBMITTED TO THE FACULTY OF GRADUATE STUDIES AND RESEARCH

IN PARTIAL FULfiLLMENT OF THE REQUIREMENTS FOR THE DEGREE OF

MASTER OF SCIENCE

Copyright

© 2014 Vineet Kumar

Abstract

MATLAB is a popular dynamic array-based language commonly used by students, scientists

and engineers who appreciate the interactive development style, the rich set of array operators, the

extensive builtin library, and the fact that they do not have to declare static types. Even though

these users like to program in MATLAB, their computations are often very compute-intensive

and are better suited for emerging high performance computing systems. This thesis

reports on MIX10, a source-to-source compiler that automatically translates MATLAB

programs to X10, a language designed for “Performance and Productivity at Scale";

thus, helping scientific programmers make better use of high performance computing

systems.

There is a large semantic gap between the array-based dynamically-typed nature of MATLAB

and the object-oriented, statically-typed, and high-level array abstractions of X10. This thesis

addresses the major challenges that must be overcome to produce sequential X10 code that is

competitive with state-of-the-art static compilers for MATLAB which target more conventional

imperative languages such as C and Fortran. Given that efficient basis, the thesis then provides a

translation for the MATLAB parfor construct that leverages the powerful concurrency

constructs in X10.

The MIX10 compiler has been implemented using the McLab compiler tools, is

open source, and is available both for compiler researchers and end-user MATLAB

programmers. We have used the implementation to perform many empirical measurements on

a set of 17 MATLAB benchmarks. We show that our best MIX10-generated code is

significantly faster than the de facto Mathworks’ MATLAB system, and that our results are

competitive with state-of-the-art static compilers that target C and Fortran. We also show

the importance of finding the correct approach to representing the arrays in X10, and

the necessity of an IntegerOkay analysis that determines which double variables can

be safely represented as integers. Finally, we show that our X10-based handling of

the MATLAB parfor greatly outperforms the de facto MATLAB implementation.

Résumé

MATLAB est un langage de programmation dynamique, orienté-tableaux communément

utilisé par les étudiants, les scientifiques et les ingénieurs qui apprécient son style de

développement interactif, la richesse de ses opérateurs sur les tableaux, sa librairie

impressionnante de fonctions de base et le fait qu’on aie pas à déclarer statiquement le type des

variables. Bien que ces usagers apprécient MATLAB, leurs programmes nécessitent souvent des

ressources de calcul importantes qui sont offertes par les nouveaux systèmes de haute

performance. Cette thèse fait le rapport de MIX10, un compilateur source-à-source qui fait la

traduction automatique de programmes MATLAB à X10, un langage construit pour "la

performance et la productivité à grande échelle." Ainsi, MIX10 aide les programmeurs

scientifiques à faire un meilleur usage des ressources des systèmes de calcul de haute

performance.

Il y a un écart sémantique important entre le typage dynamique et le focus sur les tableaux de

MATLAB et l’approche orientée-objet, le typage statique et les abstractions de haut niveau sur les

tableaux de X10. Cette thèse discute des défis principaux qui doivent être surmontés afin de

produire du code X10 séquentiel compétitif avec les meilleurs compilateurs statiques pour

MATLAB qui traduisent vers des langages impératifs plus conventionnels, tels que C et Fortran.

Fort de cette fondation efficace, cette thèse décrit ensuite la traduction de l’instruction parfor

de MATLAB afin d’utiliser les opérations sophistiquées de traitement concurrent de

X10.

Le compilateur MIX10 a été implémenté à l’aide de la suite d’outils de McLab, un projet

libre de droits, disponible à la communauté de recherche ainsi qu’aux utilisateurs de MATLAB.

Nous avons utilisé notre implémentation afin d’effectuer des mesures empiriques de performance

sur un jeu de 17 programmes MATLAB. Nous démontrons que le code généré par MIX10 est

considérablement plus rapide que le système MATLAB de Mathworks et que nos résultats sont

compétitifs avec les meilleurs compilateurs statiques qui produisent du code C et Fortran.

Nous montrons également l’importance d’une représentation appropriée des tableaux

en X10 et la nécessité d’une analyse IntegerOkay qui permet de déterminer quelles

variables de type réel (double) peuvent être correctement représentées par des entiers (int).

Finalement, nous montrons que notre traitement de l’instruction parfor en X10 nous

permet d’atteindre des vitesses d’exécution considérablement meilleures que dans

MATLAB.

Acknowledgements

I am thankful to my supervisor, Laurie Hendren, whose constant encouragement and guidance

not only helped me to understand compilers better, but also helped me become a better writer and

a better presenter.

I would like to give a special thanks to David Grove for suggesting this line of research and in

helping us understand key parts of the X10 language and implementation.

I am also thankful to the McLAB team for building an amazing framework for compiler

research on MATLAB. In particular, I am thankful to Anton Dubrau (M.Sc.) for helping me

understand the MCSAF and Tamer frameworks, and Xu Li (M.Sc.) for all the interesting

discussions on how to statically compile MATLAB.

Finally, I would like to thank my parents and my brother, who made it possible for me to

pursue my M.Sc. degree.

Apart from all the wonderful people around me, I am thankful to my computers, Antriksh,

Heisenberg, and cougar.cs.mcgill.ca for tirelessly and reliably crunching numbers day in and day

out.

This work was supported, in part, by the Natural Sciences and Engineering Research Council

of Canada (NSERC).

Table of Contents

List of Figures

List of Tables

Chapter 1

Introduction

___________________________________________________________________________________

MATLAB is a popular numeric programming language, used by millions of scientists,

engineers as well as students worldwide[Mol]. MATLAB programmers appreciate the high-level

matrix operators, the fact that variables and types do not need to be declared, the large number of

library and builtin functions available, and the interactive style of program development available

through the IDE and the interpreter-style read-eval-print loop. However, even though MATLAB

programmers appreciate all of the features that enable rapid prototyping, their applications are

often quite compute intensive and time consuming. These applications could perform

much more efficiently if they could be easily ported to a high performance computing

system.

X10 [IBM12], on the other hand, is an object-oriented and statically-typed language which

uses cilk-style arrays indexed by Point objects and rail-backed multidimensional arrays, and has

been designed with well-defined semantics and high performance computing in mind. The X10

compiler can generate C++ or Java code and supports various communication interfaces

including sockets and MPI for communication between nodes on a parallel computing

system.

In this thesis we present MIX10, a source-to-source compiler that helps to bridge the gap

between MATLAB, a language familiar to scientists, and X10, a language designed for high

performance computing systems. MIX10 statically compiles MATLAB programs to X10 and thus

allows scientists and engineers to write programs in MATLAB (or use old programs already

written in MATLAB) and still get the benefits of high performance computing without having to

learn a new language.Also, systems that use MATLAB for prototyping and C++ or Java for

production, can benefit from MIX10 by quickly convert- ing MATLAB prototypes to C++ or Java

programs via X10

On one hand, all the aforementioned characteristics of MATLAB make it a very user-friendly

and thus a popular application to develop software among a non-programmer community.

On the other hand, these same characteristics make MATLAB a difficult language to

compile statically. Even the de facto standard, Mathworks’ implementation of MATLAB is

essentially an interpreter with a JIT accelarator[The02] which is generally slower than

statically compiled languages. GNU Octave, which is a popular open source alternative to

MATLAB and is mostly compatible with MATLAB, introduced JIT compilation only in

its most recent release 3.8 in March 2014. Before that it was implemented only as an

interpreter[Oct]. Lack of formal language specification, unconventional semantics and closed

source make it even harder to write a compiler for MATLAB. These are some of the

challenges that MIX10 shares with other static compilers which convert MATLAB

to C or Fortran. However, targeting X10 raises some significant new challenges. For

example, X10 is an object-oriented, heap-based, language with varying levels of high-level

abstractions for arrays and iterators of arrays. An open question was whether or not it was

possible to generate X10 code that both maintains the MATLAB semantics, but also

leads to code that is as efficient as state-of-the-art translators that target C and Fortran.

Finding an effective and efficient translation from MATLAB to X10 was not obvious,

and this thesis reports on the key problems we encountered and the solutions that we

designed and implemented in our MIX10 system. By demonstrating that we can generate

sequential X10 code that is as efficient as generated C or Fortran code, we then enabled the

possibility of leveraging the high performance nature of X10’s parallel constructs. To

demonstrate this, we exposed scalable concurrency in MATLAB via X10 and examined

how to use X10 features to provide a good implementation for MATLAB’s parfor

construct.

Built on top of McLAB static analysis framework[Doh11, DH12b], MIX10, together with its

set of reusable static analyses for performance optimization and extended support for MATLAB

features, ultimately aims to provide MATLAB’s ease of use, sequential performance comparable to

that provided by state-of-the art compilers targetting C and Fortran, to support parallel constructs

like parfor and to expose scalable concurrency.

1.1 Contributions

The major contributions of this thesis are as follows:

-

Identifying key challenges:

- We have identified the key challenges in performing a

semantics-preserving efficient translation of MATLAB to X10.

-

Overall design of MIX10:

- Building upon the McLAB frontend and analysis framework,

we provide the design of the MIX10 source-to-source translator that includes a

low-level X10 IR and a template-based specialization framework for handling builtin

operations.

-

Techniques for efficient compilation of MATLAB arrays:

-

Arrays are the core of MATLAB. All data, including scalar values are represented as

arrays in MATLAB. Efficient compilation of arrays is the key for good performance.

X10 provides two types of array representations for multidimensional arrays: (1)

Cilk-styled, region-based arrays and (2) rail-backed simple arrays. We compare and

contrast these two array forms for a high performance computing language in context

of being used as a target language and provide techniques to compile MATLAB arrays

to two different representations of arrays provided by X10.

-

Builtin handling framework:

- We have designed and implemented a template-based

system that allows us to generate specialized X10 code for a collection of important

MATLAB builtin operations.

-

Code generation strategies for the sequential core:

- There are some very significant

differences between the semantics of MATLAB and X10. A key difference is that

MATLAB is dynamically-typed, whereas X10 is statically-typed. Furthermore, the

type rules are quite different, which means that the generated X10 code must include

the appropriate explicit type conversion rules, so as to match the MATLAB semantics.

Other MATLAB features, such as multiple returns from functions, a non-standard

semantics for for loops, and a very general range operator, must also be handled

correctly.

-

Code generation for concurrency in MATLAB:

- MIX10 not only supports all the key

sequential constructs but also supports concurrency constructs like parfor and

can handle vectorized instructions in a concurrent fashion. We have also introduced

X10-like concurrency constructs in MATLAB via structured comments.

-

Safe use of integer variables:

- Even after determining the correct use of X10 arrays, we

were still faced with a performance gap between the code generated by the C/Fortran

systems, and the generated X10 code generated by MIX10. Furthermore, we found

that the MIX10 system using the X10 Java backend was often generating better code

than with the X10 C++ backend, which was counter-intuitive.

We determined that a key remaining problem was overhead due to casting doubles to

integers. This casting overhead was quite high, and was substantially higher for the

C++ back-end than for the Java back-end (since the casting instructions are handled

efficiently by the Java VM). This casting problem arises because the default data type

for MATLAB variables is double - even variables used to iterate through arrays, and

to index into arrays typically have double type, even though they hold integral values.

To tackle this issue we developed an IntegerOkay analysis which determines which

double variables can be safely converted to integer variables in the generated X10

code, hence greatly reducing the number of casting operations needed.

-

Open implementation:

- We have implemented the MIX10 compiler over various

MATLAB compiler tools provided by the McLAB toolkit. In the process we also

implemented some enhancements to these existing tools. Our implementation is

extensible and allows for others to make further improvements to the generated X10

code, or to reuse the analyses to generate code for other target languages.

-

Experimental evaluation:

- We have experimented with our MIX10 implementation on

a set of benchmarks. Our results show that our generated code is almost an

order of magnitude faster than the Mathworks’ standard JIT-based system, and

is competitive with other state-of-the-art tools that generate C/Fortran. Our more

detailed results show the importance of using our customized and optimized X10

array representations, and we demonstrate the effectiveness of the IntegerOkay

analysis. Finally, we show that our X10-based treatment of parfor significantly

outperforms MATLAB on our benchmarks.

1.2 Thesis Outline

This thesis is divided into 11 chapters, including this one and is structured as follows.

Chapter 2 provides an introduction to the X10 language and describes how it compares to

MATLAB from the point of view of language design. Chapter 3 gives a description of various

existing MATLAB compiler tools upon which MIX10 is implemented, presents a high-level

design of MIX10, and explains the design and need of MIX10 IR. In Chapter 4 we compare the

two kinds of arrays provided by X10, identify when each of them must be used in the generated

code, identify and address challenges involved in efficient compilation of MATLAB arrays.

Chapter 5 describes the builtin handling framework used by MIX10 to generate specialized code

for MATLAB builtins used in the source program. Chapter 6 gives a description of

efficient and effective code generation strategies for the core sequential constructs of

MATLAB. A description of code generation for the MATLAB parfor construct is

provided in Chapter 7, which also describes our strategy to introduce concurrency

constructs in MATLAB. In Chapter 8 we provide a description of the IntegerOkay analysis

to identify variables that are safe to be declared as Long type. Chapter 9 provides

performance results for code generated using MIX10 for a suite of benchmarks. It

gives a comparison between performance achieved by MIX10 generated code and

that generated by the MATLAB coder and MC2FOR compilers. Chapter 10 provides

an overview of related work and Chapter 11 concludes and outlines possible future

work.

Chapter 2

Introduction to the X10 Programming

Language

___________________________________________________________________________________

In this chapter, we describe key X10 semantics and features to help

readers unfamiliar with X10 to have a better understanding of the MIX10

compiler.

X10 is an award winning open-source programming language being developed by IBM

Research. The goal of the X10 project is to provide a productive and scalable programming

model for the new-age high performance computing architectures ranging from multi-core

processors to clusters and supercomputers [IBM12].

X10, like Java, is a class-based, strongly-typed, garbage-collected and object-oriented

language. It uses Asynchronous Partitioned Global Address Space (APGAS) model to support

concurrency and distribution [SBP+12]. The X10 compiler has a native backend that

compiles X10 programs to C++ and a managed backend that compiles X10 programs to

Java.

2.1 Overview of X10’s Key Sequential Features

X10’s sequential core is a container-based object-oriented language that is very similar to that of

Java or C++ [SBP+12]. An X10 program consists of a collection of classes, structs or interfaces,

which are the top-level compilation units. X10’s sequential constructs like if-else statements,

for loops, while loops, switch statements, and exception handling constructs throw and

try…catch are also same as those in Java. X10 provides both, implicit coercions and explicit

conversions on types, and both can be defined on user-defined types. The as operator is used to

perform explicit type conversions; for example, x as Long{self != 0} converts x to type

Long and throws a runtime exception if its value is zero. Multi-dimensional arrays in X10

are provided as user-defined abstractions on top of x10.lang.Rail, an intrinsic

one-dimensional array analogous to one-dimensional arrays in languages like C or Java. Two

families of multi-dimensional array abstractions are provided: simple arrays, which

provide a restricted but efficient implementation, and region arrays which provide a

flexible and dynamic implementation but are not as efficient as simple arrays. Listing 2.1

shows a sequential X10 program that calculates the value of π using the Monte Carlo

method.

It highlights important sequential and object-oriented features of X10 detailed in the following

subsections. It reads an argument from the console in an intrinsic 1 dimensions array(Rail) of

String and converts it to a Long value N. It then generates two random numbers and performs

computations on them in a loop from 1 to N. Finally, it calculates the value of π and displays the

result on the console.

X10’s syntax is similar to that of Java. The object-oriented semantics are also similar to that

of Java. Mutable and immutable variables are denoted by keywords var and val

respectively. for loops can iterate over a range of values. For e.g. in listing 2.1, the for loop

iterates over a LongRange of 1 to N. A LongRange represents a range of Long type

integers.

import x10.util.Random; public class SeqPi { public static def main(args:Rail[String]) { val N = Long.parse(args(0)); var result:Double = 0; val rand = new Random(); for(1..N) { val x = rand.nextDouble(); val y = rand.nextDouble(); if(x*x + y*y <= 1) result++; } val pi = 4*result/N; Console.OUT.println(~The value of pi is ~ + pi); } }

Listing 2.1:

Sequential

X10

program

to

calculate

value

of

π

using

Monte

Carlo

method

2.1.1 Object-oriented Features

A program consists of a collection of top-level units, where a unit is either a class, a struct or an

interface. A program can contain multiple units, however only one unit can be made public and

its name must be same as that of the program file. Similar to Java, access to these top-level units is

controlled by packages. Below is a description of the core object-oriented constructs in

X10:

-

Class

- A class is a basic bundle of data and code. It consists of zero or more members

namely fields, methods, constructors, and member classes and interfaces [IBM13b].

It also specifies the name of its superclass, if any and of the interfaces it implements.

-

Fields

- A field is a data item that belongs to a class. It can be mutable (specified by the

keyword var) or immutable (specified by the keyword val). The type of a mutable

field must be always be specified, however the type of an immutable field may

be omitted if it’s declaration specifies an initializer. Fields are by default instance

fields unless marked with the static keyword. Instance fields are inherited by

subclasses, however subclasses can shadow inherited fields, in which case the value

of the shadowed field can be accessed by using the qualifier super.

-

Methods

- A method is a named piece of code that takes zero or more parameters and

returns zero or one values. The type of a method is the type of the return value or

void if it does not return a value. If the return type of a method is not provided by

the programmer, X10 infers it as the least upper bound of the types of all expressions

e in the method where the body of the method contains the statement return e.

A method may have a type parameter that makes it type generic. An optional method

guard can be used to specify constraints. All methods in a class must have a unique

signature which consists of its name and types of its arguments.

Methods may be inherited. Methods defined in the superclass are available in

the subclasses, unless overridden by another method with same signature. Method

overloading allows programmers to define multiple methods with same name as

long as they have different signatures. Methods can be access-controlled to be

private, protected or public; private methods can only be accessed by

other methods in the same class, protected methods can be accessed in the same

class or its subclasses, and public methods can be accessed from any code. By

default, all methods are package protected which means they can be accessed from

any code in the same package.

Methods with the same name as that of the containing class are called constructors.

They are used to instantiate a class.

-

Structs

- A struct is just like a class, except that it does not support inheritance and may

not be recursive. This allows structs to be implemented as header-less objects, which

means that unlike a class, a struct can be represented by only as much memory as

is necessary to represent its fields and with its methods compiled to static methods.

It does not contain a header that contains data to represent meta-information about

the object. The current version of X10 (version 2.4) does not support mutability and

references to structs, which means that there is no syntax to update the fields of a

struct and structs are always passed by value.

-

Function literals

- X10 supports first-class functions. A function consists of a parameter

list, followed optionally by a return type, followed by =>, followed by the

body (an expression). For example, (i:Int, j:Int) => (i<j ? foo(i)

: foo(j)), is a function that takes parameters i and j and returns foo(i) if i<j

and foo(j) otherwise. A function can access immutable variables defined outside

the body.

2.1.2 Statements

X10 provides all the standard statements similar to Java. Assignment, if - else and while

loop statements are identical to those in Java.

for loops in X10 are more advanced and apart from the standard C-like for loop, X10

provides three different kinds of for loops:

-

- enhanced for loops take an index specifier of the form i in r, where r is any value that

implements x10.lang.Iterable[T] for some type T. Code listing 2.2 below shows

an example of this kind of for loops:

def sum(a:Rail[Long]):Long{ var result:Long = 0; for (i in a){ result += i; } return result; }

Listing 2.2:

Example

of

enhanced

for

loop

-

- for loops over LongRange iterate over all the values enumerated by a LongRange(a range

of consecutive Long type values). A LongRange is instantiated by an expression like

e1..e2 and enumerates all the integer values from a to b (inclusive) where e1 evaluates

to a and e2 evaluates to b. Listing 2.3 below shows an example of a for loop that uses

LongRange:

def sum(N:Long):Long{ var result:Long = 0; for (i in 0..N){ result += i; } return result; }

Listing 2.3:

Example

of

for

loop

over

LongRange

-

- for loops over Region allow to iterate over multiple dimensions simultaneously. A Region

is a data structure that represents a set of points in multiple dimensions. For instance, a

Region instantiated by the expression Region.make(0..5,1..6) creates a

2-dimensional region of points (x,y) where x ranges over 0..5 and y over

1..6. The natural order of iteration is lexicographic. Listing 2.4 below shows an

example that calculates the sum of coordinates of all points in a given rectangle:

def sum(M:Long, N:Long):Long{ var result:Long = 0; val R:Region = x10.regionarray.Region.make(0..M,0..N); for ([x,y] in R){ result += x+y; } return result; }

Listing 2.4:

Example

of

for

loop

over

a

2-D

Region

2.1.3 Arrays

In order to understand the challenges of translating MATLAB to X10, one must understand the

different flavours and functionality of X10 arrays.

At the lowest level of abstraction, X10 provides an intrinsic one-dimensional fixed-size array

called Rail which is indexed by a Long type value starting at 0. This is the X10 counterpart of

built-in arrays in languages like C or Java. In addition, X10 provides two types of more

sophisticated array abstractions in packages, x10.array and x10.regionarray.

-

Rail-backed Simple arrays

- are a high-performance abstraction for multidimensional

arrays in X10 that support only rectangular dense arrays with zero-based indexing.

Also, they support only up to three dimensions (specified statically) and row-major

ordering. These restrictions allow effective optimizations on indexing operations on

the underlying Rail. Essentially, these multidimensional arrays map to a Rail of

size equal to number of elements in the array, in a row-major order.

-

Region arrays

- are much more flexible. A region is a set of points of the same rank, where

Points are the indexing units for arrays. Points are represented as n-dimensional

tuples of integer values. The rank of a point defines the dimensionality of the array

it indexes. The rank of a region is the rank of its underlying points. Regions provide

flexibility of shape and indexing. Region arrays are just a set of elements with each

element mapped to a unique point in the underlying region. The dynamicity of these

arrays come at the cost of performance.

Both types of arrays also support distribution across places. A place is one of the central innovations

in X10, which permits the programmer to deal with notions of locality. places are discussed in

more detail in section 2.2.4

2.1.4 Types

X10 is a statically type-checked language: Every variable and expression has a type that is known

at compile-time and the compiler checks that the operations performed on an expression are

permitted by the type of that expression. The name c of a class or an interface is the most basic

form of type in X10. There are no primitive types.

X10 also allows type definitions, that allow a simple name to be supplied for a complicated

type, and for type aliases to be defined. For example, a type definition like public static

type bool(b:Boolean) = Boolean{self=b} allows the use of expression

bool(true) as a shorthand for type Boolean{self=true}.

-

Generic types

- X10’s generic types allow classes and interfaces to be declared parameterized by

types. They allow the code for a class to be instantiated unbounded number of times, for

different concrete types, in a type-safe fashion. For instance, the listing 2.5 below shows a

class List[T], parameterized by type T, that can be replaced by a concrete type like Int

at the time of instantiation (var l:List[Int] = new List[Int](item)).

class List[T]{ var item:T; var tail:List[T]=null; def this(t:T){ item=t; } }

X10 types are available at runtime, unlike Java(which erases them).

-

Constrained types

- X10 allows the programmer to define Boolean expressions (restricted)

constraints on a type [T]. For example, a variable of constrained type Long{self != 0}

is of type Long and has a constraint that it can hold a value only if it is not equal to 0 and

throws a runtime error if the constraint is not satisfied. The permitted constraints include the

predicates == and !=. These predicates may be applied to constraint terms. A constraint

term is either a final variable visible at the point of definition of the constraint, or the special

variable self or of the form t.f where f names a field, and t is (recursively) a constraint

term.

2.2 Overview of X10’s Concurrency Features

X10 is a high performance language that aims at providing productivity to the programmer. To

achieve that goal, it provides a simple yet powerful concurrency model that provides four

concurrency constructs that abstract away the low-level details of parallel programming from the

programmer, without compromising on performance. X10’s concurrency model is based on the

Asynchronous Partitioned Global Address Space (APGAS) model [IBM13a]. The APGAS model

has a concept of global address space that allows a task in X10 to refer to any object (local or

remote). However, a task may operate only on an object that resides in its partition of the address

space (local memory). Each task, called an activity, runs asynchronously parallel to each other. A

logical processing unit in X10 is called a place. Each place can run multiple activities. There are

four types of concurrency constructs provided by X10 [IBM13b], as described in the following

sections:

2.2.1 Async

The fundamental concurrency construct in X10 is async. The statement async S creates a new

activity to execute S and returns immediately. The current activity and the “forked" activity

execute asynchronously parallel to each other and have access to the same heap of objects as the

current activity. They communicate with each other by reading and writing shared variables.

There is no restriction on statement S in the sense that it can contain any other constructs

(including async). S is also permitted to refer to any immutable variable defined in lexically

enclosing scope.

An activty is the fundamental unit of execution in X10. It may be thought of as a very

light-weight thread of execution. Each activity has its own control stack and may invoke recursive

method calls. Unlike Java threads, activities in X10 are unnamed. Activities cannot be aborted or

interrupted once they are in flight. They must proceed to completion, either finishing

correctly or by throwing an exception. An activity created by async S is said to be

locally terminated if S has terminated. It is said to be globally terminated if it has

terminated locally and all activities spawned by it recursively, have themselves globally

terminated.

2.2.2 Finish

Global termination of an activity can be converted to local termination by using the finish

construct. This is necessary when the programmer needs to be sure that a statement S and all

the activities spawned transitively by S have terminated before execution of the next

statement begins. For instance in the listing 2.5 below, the use of finish ensures

that the Console.OUT.println(~a(1) = ~ + a(1)); statement is executed

only after all the asynchronously executing operations (async a(i) *= 2; have

completed.

//... //Create a Rail of size 10, with i’th element initialized to i val a:Rail[Long] = new Rail[Long](10,(i:Long)=>i); finish for (i in 0..9){ //asynchronously double every value in the Rail async a(i) *= 2; } Console.OUT.println(~a(1) = ~ + a(1)); //...

Listing 2.5:

Example

use

of

finish

construct

2.2.3 Atomic

The statement atomic S ensures that the statement S is executed in a single step with respect to

all other activities in the system. When S is being executed in one activity all other activities

containing s are suspended. However, the atomic statement S must be sequential (it should

not contain an async or finish staement), non-blocking (it should not contain a

blocking construct like when, explained below) and local (in this context, local means

place-local, that is, it must not contain an at construct, explained in Sec. 2.2.4). Consider

the code fragment in listing 2.6. It asynchronously adds Long values to a linked-list

list and simultaneously holds the size of the list in a variable size. The use of

atomic guarantees that no other operation, in any activity, is executed in between (or

simultaneously with) these two operations, which is necessary to ensure correctness of the

program.

//... finish for (i in 0..10){ async add(i); } //... def add(x:Long){ atomic { this.list.add(x); this.size = this.size + 1; } } //...

Listing 2.6:

Example

use

of

atomic

construct

Note that, atomic is a syntactic sugar for the construct when (c) , which is the conditional

atomic statement based on binary condition (c). Statement when (c) S executes statement S

atomically only when c evaluates to true; if it is false, the execution blocks waiting for c to be

true. Condition c must be sequential, non-blocking and local.

2.2.4 At

A place in X10 is the fundamental processing unit. It is a collection of data and activities that

operate on that data. A program is run on a fixed number of places. The binding of places to

hardware resources (e.g. nodes in a cluster, accelerators) is provided externally by a configuration

file, independent of the program.

The at construct provides a place-shifting operation, that is used to force execution of a

statement or an expression at a particular place. An activity executing at (p) S suspends

execution at the current place; The object graph G at the current place whose roots are all the

variables V used in S is serialized, and transmitted to place p, deserialized (creating a

graph G′ isomorphic to G), an environment is created with the variables V bound to the

corresponding roots in G′, and S executed at p in this environment. On local termination of S,

computation resumes after at (p) S in the original location. The object graph is

not automatically transferred back to the originating place when S terminates: any

updates made to objects copied by an at will not be reflected in the original object

graph.

2.3 Overview of X10’s Implementation and Runtime

In order to understand the compilation flow of the MIX10 compiler and enhancements made to

the X10 compiler for efficient use of X10 as a target language for MATLAB, it is important to

understand the design of the X10 compiler and its runtime environment.

2.3.1 X10 Implementation

X10 is implemented as a source-to-source compiler that translates X10 programs to

either C++ or Java. This allows X10 to achieve critical portability, performance and

interoperability objectives. The generated C++ or Java program is, in turn, compiled by

the platform C++ compiler to an executable or to class files by a Java compiler. The

C++ backend is referred to as Native X10 and the Java backend is called Managed

X10.

The source-to-source compilation approach in X10 provides three main advantages: (1) It

makes X10 available for a wide range of platforms; (2) It takes advantage of the underlying

classical and platform-specific optimizations in C++ or Java compilers, while the X10

implementation includes only X10 specific optimizations; and (3) It allows programmers to take

advantage of the existing C++ and Java libraries.

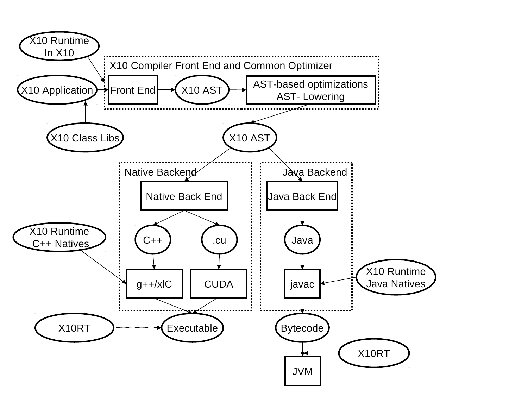

Figure 2.1 shows the overall architecture of the X10 compiler [IBM13b].

2.3.2 X10 Runtime

Figure 2.2 shows the major components of the X10 runtime and their relative hierarchy [IBM13b].

The runtime bridges the gap between application program and the low-level network transport

system and the operating system. X10RT, which is the lowest layer of the X10 runtime,

provides abstraction and unification of the functionalities provided by various network

layers.

The X10 Language Native Runtime provides implementation of the sequential

core of the language. It is implemented in C++ for native X10 and Java for Managed

X10.

XRX Runtime, the X10 runtime in X10 is the core of the X10 runtime system. It provides

implementation for the primitive X10 constructs for concurrency and distribution (async,

finish, atomic and at). It is primarily written in X10 over a set of low-level APIs that

provide a platform-independent view of processes, threads, synchronization mechanisms and

inter-process communication.

At the top of the X10 runtime system, is a set of core class libraries that provide fundamental

data types, basic collections, and key APIs for concurrency and distribution.

Chapter 3

Background and High-level Design of MIX10

___________________________________________________________________________________

3.1 Background

MIX10 is implemented on top of several existing MATLAB compiler tools. The overall structure

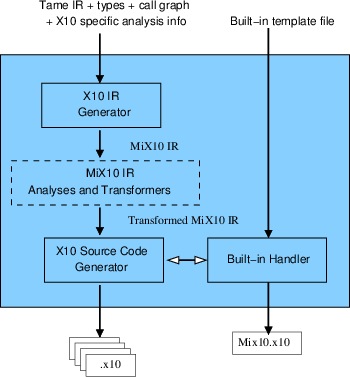

is given in Figure 3.1, where the new parts are indicated by the shaded boxes, and future work is

indicated by dashed boxes.

As illustrated at the top of the figure, a MATLAB programmer only needs to provide an

entry-point MATLAB function (called myprog.m in this example), plus a collection of other

MATLAB functions and libraries (directories of functions) which may be called, directly or

indirectly, by the entry point. The programmer may also specify the types and/or shapes of the

input parameters to the entry-point function. As shown at the bottom of the figure, our MIX10

compiler automatically produces a collection of X10 output files which contain the generated

X10 code for all reachable MATLAB functions, plus one X10 file called mix10.x10 which

contains generated and specialized X10 code for the required builtin MATLAB functions. Thus,

from the MATLAB programmer’s point of view, the MIX10 compiler is quite simple to

use.

MATLAB is actually quite a complicated language to compile, starting with its rather unusual

syntax, which cannot be parsed with standard LALR techniques. There are several issues that

must be dealt with including distinguishing places where white space and new line characters

have syntactic meaning, and filling in missing end keywords, which are sometimes

optional. The McLAB front-end handles the parsing of MATLAB through a two step

process. There is a pre-processing step which translates MATLAB programs to a cleaner

subset, called Natlab, which has a grammar that can be expressed cleanly for a LALR

parser. The McLAB front-end delivers a high-level AST based on this cleaner grammar.

After parsing, the next major phase of MIX10 uses the MCSAF framework [DH12a, Doh11]

to disambiguate identifiers using kind analysis [DHR11], which determines if an identifier refers

to a variable or a named function. This is required because the syntax of MATLAB does not

distinguish between variables and functions. For example, the expression a(i) could refer to four

different computations, a could be an array or a function, and i could refer to the builtin function

for the imaginary value i, or it could refer to a variable i. The MCSAF framework also

simplifies the AST, producing a lower-level AST which is more amenable to subsequent

analysis.

The next major phase is the Tamer [DH12b], which is a key component for any tool which

statically compiles MATLAB. The Tamer generates an even more defined AST called Tamer IR, as

well as performing key interprocedural analyses to determine both the call graph and an estimate

of the base type and shape of each variable, at each program point. The call graph is needed to

determine which files (functions) need to be compiled, and the type and shape information is very

important for generating reasonable code when the target language is statically typed, as is the

case for X10.

The Tamer also provides an extensible interprocedural value analysis and an interprocedural

analysis framework that extends the intraprocedural framework provided by MCSAF. Any static

backend will use the standard results of the Tamer, but is also likely to implement some

target-language-specific analyses which estimate properties useful for generating code in a

specific target language. Currently, we have implemented two analyses : (1) An analysis for

determining if a MATLAB variable is real or complex to enable support for complex numbers in

MIX10 and other MATLAB compilers based on McLAB; and (2) IntegerOkay analysis to identify

which variables can be safely declared to be of an integer type (Int or Long) instead of the

default type Double.

For the purposes of MIX10, the output of the Tamer is a low-level, well-structured

AST, which along with key analysis information about the call graph, the types and

shapes of variables, and X10-specific information. These Tamer outputs are provided to

the code generator, which generates X10 code, and which is the main focus of this

thesis.

The X10 source code generator actually gets inputs from two places. It uses the Tamer IR it

receives from the the Tamer to drive the code generation, but for expressions referring to

built-in MATLAB functions it interacts with the Built-in Handler which used the built-in

template file we provide. We describe the functioning of the built-in handler in Chapter

5.

Chapter 4 concentrates on generating efficient code for MATLAB arrays. Chapter 6

describes the code generation strategy for the sequential core of MATLAB, while Chapter 7

describes our strategy to generate parallel X10 code for MATLAB parfor construct, and

introducing X10 like concurrency constructs in MATLAB. The focus of this thesis is to

address challenges in generating efficient X10 code whose performance is comparable to

state-of-the-art tools that generate more traditional imperative languages like C and

Fortran.

3.2 High-level Design of the MIX10 Compiler

The MIX10 code generator is the key component which makes the translation from the Tamer IR,

which is based on MATLAB programming constructs and semantics, to X10. The overall structure

of the MIX10 code generator is given in Figure 3.2.

The input to to MIX10 compiler is the call graph generated by Tamer and the Tame IR

annotated with the necessary analysis information like shape, iscomplex, and type and

IntegerOkay. Rather than do a direct code generation to X10 source code, MIX10 translates the

Tamer IR to MIX10 IR, a general and extensible IR, designed by us, to represent X10. This

translation is done by the X10 IR generator module of MIX10. Finally, with the inputs from the

builtin handler and the X10 IR generator (after the X10 specific analyses and transformations

have been done), the X10 source code generator generates the resultatnt X10 source

code.

3.2.1 The MIX10 Intermediate Representation

The MIX10 IR is a low-level, three address like intermediate representation that is similar in

design to the Tamer IR and abstracts the X10 constructs while capturing the static

information required to generate the X10 source code. We have implemented the IR using

JastAdd [EH04, jas], which allows us to easily add new AST nodes by simply extending the

JastAdd specification grammar.

There are three important reasons to use an IR, instead of directly generating the X10

code:

- There are potentially two places that optimizations and transformations may happen:

either at the Tamer IR level or at the MIX10 IR level. It is our intent to put

any analysis or transformation that is not X10-specific into the Tamer IR, so that

other back-ends can benefit from those improvements. However, optimizations and

transformations that are specific to X10 programming constructs (such as points and

regions) and semantics will need to be done on the MIX10 IR. Although we currently

do not transform the MIX10 IR very much, the ultimate goal is to support a variety

of analyses and transformations that can be used to: (1) produce more efficient X10

code, and (2) produce more readable X10 code.

- There are X10 constructs that can be pretty printed to be of different kinds,

for example the X10 arrays, which can either be simple arrays, region arrays or

specialized region arrays, and X10 for loop which can either be C-like for loop

or an iterator over a LongRange (used in generated code for parfor loop). It

allows to abstract the vital information for a construct while leaving the actual syntax

to the source code generator. This makes it easier to add X10 specific analyses

and transformations, and makes it easy to update the compiler whenever a new or

improved variation of a construct is added.

- MIX10 IR can also be used as a convenient place to insert instrumentation code for

the generated X10 code.

Appendix B provides the JastAdd implementation of the MIX10 IR grammar.

Chapter 4

Techniques for Efficient Compilation of

MATLAB Arrays

___________________________________________________________________________________

Arrays are the core of the MATLAB programming language. Every value in MATLAB

is a Matrix and has an associated array shape. Even scalar values are represented as

1×1 arrays. Most of the data read and write operations involve accessing individual or

a set of array elements. Given the central role of arrays in MATLAB, it is of utmost

importance for our MIX10 compiler to find effective and efficient translations to X10

arrays.

Given the shape information provided by the shape analysis engine [LH14] built

into the McLAB analysis framework [DH12a, Doh11, DH12b], it was not hard to

compile MATLAB arrays to X10. However, to generate X10 code whose performance

would be competitive to the generated C code (via MATLAB coder) and the generated

Fortran code (via the MC2FOR compiler), was not straightforward, and required deeper

understanding of the X10 array system and careful handling of several features of the MATLAB

arrays.

4.1 Simple Arrays or Region Arrays

As described in Section 2.1.3, X10 provides two higher level abstractions for arrays, simple

arrays, a high performance but rigid abstraction, and region arrays, a flexible abstraction but not as

efficient as the simple arrays. In order to achieve more efficiency, our strategy is to use the simple

arrays whenever possible, and to fall back to the region arrays when necessary. Note that it is

possible to force the MIX10 compiler to use region arrays via a switch, for experimentation

purposes.

4.1.1 Compilation to Simple Arrays

In dealing with the simple rail-backed arrays, there were two important challenges. First, we

needed to determine when it is safe to use the simple rail-backed arrays, and second, we needed

an implementation of simple rail-backed arrays that handles the column-major, 1-indexing, and

linearization operations required by MATLAB.

When to use simple rail-backed arrays:

After the shape analysis of the source MATLAB program, if shapes of all the arrays in the

program: (1) are known statically, (2) are supported by the X10 implementation of simple arrays

and (3) the dimensionality of the shapes remain same at all points in the program; then MIX10

generates X10 code that uses simple arrays.

Column-major indexing:

In order to make X10 simple arrays more compatible with MATLAB, we modified the implementation

of the Array_2 and Array_3 classes in x10.array package to use column-major ordering instead

of the default row-major ordering when linearizing multidimensional arrays to the backing Rail

storage.http://www.sable.mcgill.ca/mclab/mix10/x10_update/

Since MATLAB uses column-major ordering to linearize arrays, this modification

also makes it trivial to support linear indexing operations in MATLAB.

http://www.mathworks.com/help/matlab/math/matrix-indexing.html

MATLAB naturally supports linear indexing for individual element access. More precisely, if the

number of subscripts in an array access is less than the number of dimensions of the array, the last

subscript is linearly indexed over the remaining number of dimensions in a column-major fashion.

Our modification to use column-major ordering for the backing Rail make it easier and more

efficient to support linear indexing by allowing direct access to the underlying Rail at the

calculated linear offset.

Given that we can determine when it is safe to use the simple rail-backed arrays,

and our improved X10 implementation of them, we then designed the appropriate

translations from MATLAB to X10, for array construction, array accesses for both individual

elements and ranges. Given the number of dimensions and the size of each dimension, it is

easy to construct a simple array. For example a two-dimensional array A of type T and

shape m×n can be constructed using a statement like val A:Array_2[T] = new

Array_2[T](m,n);. Additional arguments can be passed to the constructor to initialize the

array. Another important thing to note is that MATLAB allows the use of keyword end or an

expression involving end (like end-1) as a subscript. end denotes the highest index

in that dimension. If the highest index is not known the numElems_i property of

the simple arrays is used to get the number of elements in the ith dimension of the

array.

4.1.2 Compilation to Region Arrays

With MATLAB’s dynamic nature and unconventional semantics, it is not always possible to

statically determine the shape of an arrays accurately. Luckily, with some thought to

a proper translation, X10’s region arrays are flexible enough to support MATLAB’s

“wild" arrays. Also, since Point objects can be a set of arbitrary integers, there is no

restriction on the starting index of the arrays. Region arrays can easily use one-based

indexing.

Array construction:

Array construction for region arrays involves creating a region over a set of points (or index

objects) and assigning it to an array. Regions of arbitrary ranks can be created dynamically. For

example, consider the following MATLAB code snippet:

function[x] = foo(a) t = bar(a); x = t; ... end

function[y] = bar(a) if (a == 3) y = zeros(a,a+1,a+2,a+3); else y = zeros(a,a+1,a+2); end end

In this code, the number of dimensions of array t and hence array x cannot be determined

statically at compile-time. In such case, it is not possible to generate X10 code that uses

simple arrays, however, it can still be compiled to the following X10 code for function

foo().

static def foo(a: Double){ val t: Array[Double] = new Array[Double](bar(a)); val x: Array[Double] = new Array[Double](t); ... return x; }

static def bar(a:Double){ var y:Array[Double]=null; if (a == 3) { y = new Array[Double] (Mix10.zeros(a,a+1,a+2,a+3)); } else { y = new Array[Double] (Mix10.zeros(a,a+1,a+2)); } return y; }

In this generated X10 code, t is an array of type Double which can be created by copying

from another array returned by bar(a) without knowing the shape of the returned

array.

Array access:

Subscripting operations to access individual elements are mapped to X10’s region array

subscripting operation. If the rank of array is 4 or less, it is subscripted directly by integers

corresponding to subscripts in MATLAB otherwise we create a Point object from these integer

values and use it to subscript the array. In case an expression involving end is used for indexing

and the complete shape information is not available, method max(Long i), provided by the

Region class is used, allowing to determine the highest index for a particular dimension at

runtime.

Rank specialization:

Although region arrays can be used with minimal compile-time information, providing

additional static information can improve performance of the resultant code by eliminating

run-time checks involving provided information. One of the key specializations that we

introduced with use of region arrays is to specify the rank of an array in its declaration, whenever

it is known statically. For example if rank of an array A of type T is known to be two, it can be

declared as val A:Array[T](2);. This specialization provided better performance

compared to unspecialized code as shown in section 9.6.

4.2 Handling the Colon Expression

MATLAB allows the use of an expression such as a:b (or colon(a,b)) to create a vector of

integers [a, a+1, a+2, ... b]. In another form, an expression like a:i:b can be used to

specify an integer interval of size i between the elements of the resulting vector. Use of a

colon expression for array subscripting takes all the elements of the array for which the

subscript in a particular dimension is in the vector created by the colon expression in that

dimension.

Consider the following MATLAB code:

function [x] = crazyArray(a) y = ones(3,4,5); x = y(1,2:3,:); end

Here y is a three-dimensional array of shape 3×4×5 and x is a sub-array of y of shape 1×2×5.

Such array accesses can be handled by simply calling the getSubArray[T]() that we have

implemented in a Helper class provided with the generated code. The generated X10 code with

simple array for this example is as follows:

static def crazyArray (a: Double){ val y: Array_3[Double] = new Array_3[Double](Mix10.ones(3, 4, 5)); val mc_t0: Array_1[Double] = new Array_1[Double](Mix10.colon(2, 3)); var x: Array_3[Double]; x = new Array_3[Double](Helper.getSubArray(1, 1, mc_t0(0), mc_t0(1), 1, 5, y)) ; return x; }

The colon operator can also be used on the left hand side for an array set operation that

updates multiple values of the array. For example, in the MATLAB statement x(:,4) = y;, all

the values of the fourth column of x will be set to y if y is a scalar or to corresponding values of y

if y is a column vector with length equal to the size of first dimension of x. To handle this kind of

operation we have implemented another helper method, setSubArray(). This method takes as

input, the target array, the bounds on each dimension, and the source array. x(:,4) = y; will be

translated by MIX10 to x = Helper.setSubArray(x, 1, x.numElems_1, 4, 4,

y);

We have implemented overloaded versions of the getSubArray() and the

setSubArray() methods for arrays of different dimensions. For region arrays, we provide the

same methods that operate on region arrays in a different version of the Helper class.

MIX10 provides the correct version of the Helper class, based on what kind of arrays are

used.

4.3 Array Growth

MATLAB allows explicit array growth during runtime via the horzcat() and the vertcat()

builtin functions for array concatenation operations. In MIX10 this feature is supported for

simple arrays as long as the array growth does not change the number of dimensions of the array.

For region arrays, this feature is supported in full. For simple arrays, X10 allows a variable

declared to be an array of rank i, to hold any array value of the same rank. For example, consider

the following set of statements:

//... var x:Array_2[Long]; x = new Array_2(3,4,0); y = new Array_2(3,5,0); x=y; //...

Here, x is defined to be of type Array_2[Long] and can hold arrays of different sizes at

different points in the program.

Region arrays, being more dynamic, also support array growth even if it changes the rank of

the array. For example, the following set of statements are valid in an X10 program that uses

region arrays:

//... var x:Array[Long]; x = new Array(Region.make(1..3,1..4),0); y = new Array(Region.make(1..3,1..5,1..6),1); x=y; //...

Here x is a 2-dimensional array and y is a 3-dimensional array.

Section 9.6 discusses the performance results obtained by using different kinds of arrays and

provides a comparison of them, thus showing the efficiency of our approach for compiling

MATLAB arrays to X10.

Chapter 5

Handling MATLAB Builtins

___________________________________________________________________________________

MATLAB builtin methods are the core of the language and one of the features that make it

popular among scientists. They provide a huge set of commonly used numerical functions. All the

operators, including the standard binary operators (+, -, *,/), comparison operators

(<, >, <=, >=, ==) and logical operators (&, &&, |, ||) are merely syntactic sugar for

corresponding builtin methods that take the operands as arguments. For example an expression

like a+b is actually implemented as plus(a,b). An important thing to note is that unlike most

programming languages, all the MATLAB builtin methods by default operate on matrix values as a

whole. For example a*b or mtimes(a,b) actually performs matrix multiplication on matrix

values a and b. However, most of the builtin methods also accept one or more scalar, or more

accurately, 1×1 matrix arguments. Builtin methods are overloaded to accept almost all

possible shapes of arguments. Thus mtimes(a,b) can have both a and b as matrix

arguments (including 1×1 matrices) with number of columns in a equal to number

of rows in b, in which case the result is a matrix multiplication of a and b or one of

them can be a 1×1 matrix and other can be a matrix of any size and the result is a

matrix containing each element of the non-scalar argument times the scalar argument.

Wherever possible, MATLAB builtins also support complex numerical values. X10 on

the other hand, like most of the programming languages operates on scalar values by

default.

Due to the fact that X10 is still new and evolving, it has a very limited set of libraries,

specially to support a large subset of available MATLAB builtin methods. The X10 Global Matrix

Library (GML) supports double-precision matrix operations however it is still not as extensive as

MATLAB’s set of operations and it poses some restrictions:

- It works on values of type Matrix instead of X10 type Array which means it

needs explicit conversion of Array values to Matrix values before performing a

matrix operation and and then a conversion of the results back to Array type. This

conversion may be a large overhead, especially for small data sizes.

- GML is limited to Matrix values of two dimensions and containing elements of type

Double, whereas many MATLAB builtin methods support values of greater number

of dimensions.

- GML currently does not support complex numerical values whereas MATLAB

naturally supports them.

- Currently GML requires a separate installation and configuration which is non-trivial

specially for scientists who need something that works out of the box.

Due to above restrictions, X10 Global Matrix Library is useful in some situations, for

example when there is a matrix multiplication of a very large data size, but cannot be used or is

not a good choice for a large number of operations.

For a language with open-sourced libraries, it would be possible to actually compile the

library methods to X10. However, many MATLAB libraries are closed source and thus it is not

possible to translate them to X10.

5.0.1 The MIX10 Builtin Support Framework

We decided to write our own X10 implementations of the commonly used MATLAB

builtin methods. Currently we have implemented only those methods that are used in our

benchmarks. In this thesis, we concentrate on how these methods are included in the

generated X10 code with minimal loss of readability and performance rather than the actual

implementation.

The code below shows the X10 code for the MATLAB builtin method plus(a,b) involving

2-dimensional simple arrays.

public static def plus(a: Array_2[Double], b:Array_2[Double]){ val x = new Array_2[Double](a.numElems_1, a.numElems_2); for (p in a.indices()){ x(p) = a(p)+ b(p); } return x; } public static def plus(a:Double, b:Array_2[Double]){ val x = new Array_2[Double](b.numElems_1, b.numElems_2); for (p in b.indices()){ x(p) = a+ b(p); } return x; } public static def plus(a:Array_2[Double], b:Double){ val x = new Array_2[Double](a.numElems_1, a.numElems_2); for (p in a.indices()){ x(p) = a(p)+ b; } return x; } public static def plus(a:Double, b:Double){ val x: Double; x = a+b; return x; }

This X10 code contains four overloaded versions (and it still does not contain methods to

support complex values, variables of types other than Double, simple arrays of other dimensions,

and region arrays) based on whether the arguments are scalar or Array_2 and their relative

position in the list of arguments.

Including all the overloaded versions and versions specialized for arrays of different

dimensions or region arrays, in the generated X10 code would result in lot of lookup overhead,

would require producing redundant code (versions of methods with arguments of similar shape

but different types will have the same algorithm) and would generate large code with less

readability. Instead we designed a specialization technique that selects the appropriate

versions of only the methods used in the source MATLAB program. Note that the use of

generic types to handle arguments of different types is not always a good idea, since

several builtin implementations involve calls to X10 library functions which are not

defined on generic types. For example, functions in the x10.lang.Math librarry like

floor(Double a), max(Double a, Double b), etc. do not take generic type

arguments.

After studying numerous builtin methods we categorized them into the following five

categories:

-

Type 1:

- All the parameters are scalar values or no parameters.

-

Type 2:

- All the parameters are arrays.

-

Type 3:

- First parameter is scalar, rest of the parameters are arrays.

-

Type 4:

- Last parameter is scalar, rest of the parameters are arrays.

-

Type 5:

- Any other type(Default).

Each of these categories, except Type 5, uses similar code template for different types of

values. Note that due to the three address code-like structure of Tame IR, any call to a builtin

almost always contains zero, one or two arguments. For builtin calls like horzcat

and vertcat which may contain variable number of arguments, MIX10 packs all

the arguments in a Rail and passes a single argument of type Rail. Accordingly,

these builtins are implemented in Type 2 category and receive a single argument of type

Rail.

We build an XML file that contains the method bodies for each category for every builtin

method (that we support). The XML also contains specialized implementations of every builtin

for different kinds of arrays. We implement the following strategy to select and generate the

correct and required methods. First, we make a pass through the AST to make a list of all the

builtin methods used in the source MATLAB program. Next, we parse the XML file

once and read in the X10 code templates for all the categories of the builtin methods

collected in the first step. Next, whenever a call to a builtin method is made, based on the

results of the value analysis we: (1) Identify the required specialization for the method

(simple array or region array); and (2) generate the correct method header and select

the corresponding builtin template in the required specialization for that method. The

generated methods are finally written to a X10 class file named Mix10.x10. In the

code generated for actual MATLAB program, the call to a builtin method is simply

replaced by a call to the corresponding method in the Mix10 class. For example, MATLAB

expression plus(a,b) is translated to X10 expression Mix10.plus(a,b). Appendix A

demonstrates the structure of the builtin XML with an example implementation of the builtin

plus.

Using the above approach not only improves the readability of the generated code, but it also

allows for future extensibility, better maintenance and more specialization. Specializations that we

plan to add in future are: (1) the ability to use the Global Matrix Library for the available methods

in it and whenever the data size is large enough; and (2) concurrent versions of the relevant

builtins to support the execution of vector instructions in parallel fashion, as described in

section 7.3. We also encourage advanced users to mdoify the generated Mix10.x10 file

to enhance or add builtin implementations for higher performance of the generated

code.

Chapter 6

Code Generation for the Sequential Core

___________________________________________________________________________________

MATLAB is a programming language designed specifically for numerical computations. Every

value is a Matrix and has an associated array shape. Even scalar values are 1×1 matrices. Vectors

are 1×n or n×1 matrices. All the values are by default of type double. MATLAB naturally

supports imaginary components for all numerical values and almost all operators and library

functions support complex inputs. In the rest of this chapter we describe some of the key features

of MATLAB that demonstrate what makes MATLAB different and challenging to compile

statically and techniques used by MIX10 to translate these “wild" features to X10. We provide

necessary details on the various MATLAB constructs as we discuss them, however for the readers

who are totally unfamiliar with MATLAB, we suggest reading chapter 2 of the M.Sc. thesis on the

Tamer framework [Dub12].

6.1 Methods

A function definition in MATLAB takes one or more input arguments and returns one or more

values. A typical MATLAB function looks as follows:

function[x,y] = foo(a,b) x = a+3; y = b-3; end

This function has two input arguments a and b that can be of any type and any shape and

returns two values x and y of the same shape as a and b respectively and of types determined by

MATLAB’s type conversion rules. The Tamer IR provides a list of input arguments and a list of

return values for a function. The interprocedural value analysis identifies the types, shapes

and whether they are complex numerical values for all the arguments and the return

values.

MATLAB functions are mapped to X10 methods. If it is the entry function, the type of the

input argument is specified by the user (Tame IR requires to have an entry function or a driver

function with one argument. This function may call other functions with any number of input

arguments). For other functions the parameter types are computed by the value analysis

performed by the Tamer on the Tame IR. The type information computed includes the type of the

value, its shape and whether it is a complex value. Other statements in the function block are

processed recursively and corresponding nodes are created in the X10 IR. Finally, if there are

any return values, as determined by the Tame IR, a return statement is inserted in the

X10 IR at the end of the method. If the function returns only one value, say x then the

inserted statement is simply return x; but if the function returns more than one

values (which is quite common in MATLAB) then we return a Rail of type Any whose

elements are the values that are returned. So, for the above example the return statement is

return [x as Any, y as Any];. Note that the use of short syntactic form for Rail

construction improves the readability of the generated code. Below is the generated code for the

simple example above.

static def foo(a: Double, b: Double){ var mc_t0: Double = 3.3; var x: Double = Mix10.plus(a, mc_t0); var mc_t1: Double = 3.2; var y: Double = Mix10.minus(b, mc_t1); return [x as Any, y as Any]; }

Also note that the variables mc_t0 and mc_t1 are introduced by Tamer in the Tame

IR.

6.2 Types, Assignments and Declarations

MATLAB provides following basic types:

- double, single: floating point values

- uint8, uint16, uint32, uint64: unsigned integer values

- int8, int16, in32, int64: integer values

- logical: boolean values

- char: character values (strings are vectors of char)

These basic types are naturally mapped to X10 base types as follows. Floating point values

are mapped to Double and Float respectively, unsigned integers are mapped to

UByte, UShort, UInt and ULong, integer values are mapped to Byte, Short, Int

and Long, logical is mapped to Boolean and char is mapped to Char (vector of chars is

mapped to String type). If the shape of an identifier of type T is greater than 1×1 it is mapped

to Array[T]. The type conversion rules are quite different from standard languages. For

example, an operation involving a double and an int32 results in a value of type

int32.The type rules are explained in detail in the Tamer documents, www.sable.mcgill.ca/mclab/tamer.html.

MIX10 inserts an explicit typecast wherever required.

All the MATLAB operators are designed to work on matrix values and are provided as

syntactic sugar to the corresponding builtin methods that take operands as arguments. Operators

are overloaded to support different semantics for 1×1 matrices (scalar values). MATLAB provides

two types of operators - matrix operators and array operators. Matrix operators work on whole

matrix values. These include matrix multiplication (*) and matrix division (\, /). Array operators

always operate in an element-wise manner. For example array multiply operator .* performs

element-wise multiplication. As described in Chapter 5, MIX10 implements all operators as

builtins.

MATLAB is a dynamically typed language which means that variables need not be declared

and take up any value that they are assigned to. X10 however, is statically typed and requires

variables to be declared before being assigned to. MIX10 maintains a list of all the declared

variables. It starts with an empty list. Whenever an identifier appears in an assignment statement

on LHS, if it is not already present in the list, a declaration statement is added to the X10 IR and

the variable (with its associated type and value information) is added to the list, followed by an

assignment statement corresponding to the statement in MATLAB. If the identifier is

already present in the list, the assignment statement is added to the X10 IR and the

associated type and value information is updated. In case the MATLAB assignment

statement is inside a loop and needs a declaration, the declaration statement (without any

assignment) is added to the method block outside any loop or conditional scope and

the assignment statement is added in the scope where it is present in MATLAB code.

If the identifier on LHS is an array, then the declaration creates a new array with the

shape corresponding to the shape of the RHS array. For example a MATLAB statement

like a=b; where shape of a is, say, 3×3 and type is double will be translated to

a:Array_2[Double]=new Array_2[Double](b); for simple arrays and

a:Array[Double]=new Array[Double](b); for region arrays (outside the scope of

any loops or conditionals).

6.3 Loops

Loops in MATLAB are fairly intuitive except for one semantic difference from most of the

languages. In a for loop if the loop index variable is redefined inside the body of the loop, then

its new value is persistent only in a particular iteration and does not affect the number of loop

iterations. For example, consider the following listing.

function [x] = forTest1(a) for i = (1:10) i=3; a=a+i; end x=a; end

Note that inside every iteration, the value of loop index variable i is 3 but the loop still

terminates after ten iterations. The above code would be translated to the following X10

code:

static def forTest1 (a: Double) { //Note that in the actual generated code //the name of the temporary variable introduced by MiX10 //is mangled to ensure that there is no //conflict with any other variable name. var mc_t0: Long = 1; var mc_t1: Long = 10; var i_x10: Long; var b: Double; var i: Long; for (i_x10 = mc_t0; (i_x10 <= mc_t1); i_x10 = (i_x10 + 1)) { i = i_x10; i = 3 ; b = Mix10.plus(a, i) ; } var x: Double = a; return x; }

To handle this somewhat different semantics we introduce a new loop index variable and

assign it to the original loop index variable at the beginning of the loop body. The rest of the loop

body is translated by standard rules. Note that the new loop index variable is introduced only if

the actual loop index variable is redefined inside the loop body.

6.4 Conditionals

In MATLAB conditionals are expressed using the if-elseif-else construct and do not have any wild

semantics. MATLAB also allows switch statements which are converted to equivalent if-else

statements by the Tamer. It also recursively converts a statement like if (B1) S1 elseif

(B2) S2 else S3 to a series of if-else clauses like if (B1) S1 else{ if(B2)

S2 else S3}. This if-else construct is intuitively mapped to the if-else construct in

X10.

6.5 Function Calls

Function calls in MATLAB are similar to other programming languages if the called function

returns nothing or returns only one value. However, MATLAB allows a function to

return multiple values. Whenever a call is made to such a function, returned values are

received in a list in the order specified by function definition. For example in the statement

[x,n] = bubble(a); a call is made to the function bubble which returns two values that

are read into x and n respectively. This statement is compiled to following code in

X10.

//Note that in the actual generated code //the name of the temporary variable introduced by MiX10 //is mangled to ensure that there is no //conflict with any other variable name. var x: Double; var n: Double; val _x_n: Rail[Any]; _x_n = bubble(A) ; x = _x_n(0) as Double ; n = _x_n(1) as Double ;

The key idea here is to create a Rail of type Any and read the returned value. Remember that

MIX10 packs the multiple return values of a method in a Rail of type Any and returns it.

Individual elements of the list simply read the values from this array. If the function call is inside a

loop, all the declarations are moved out of the loop and only assignments are inside the

loop.

6.6 Cell Arrays